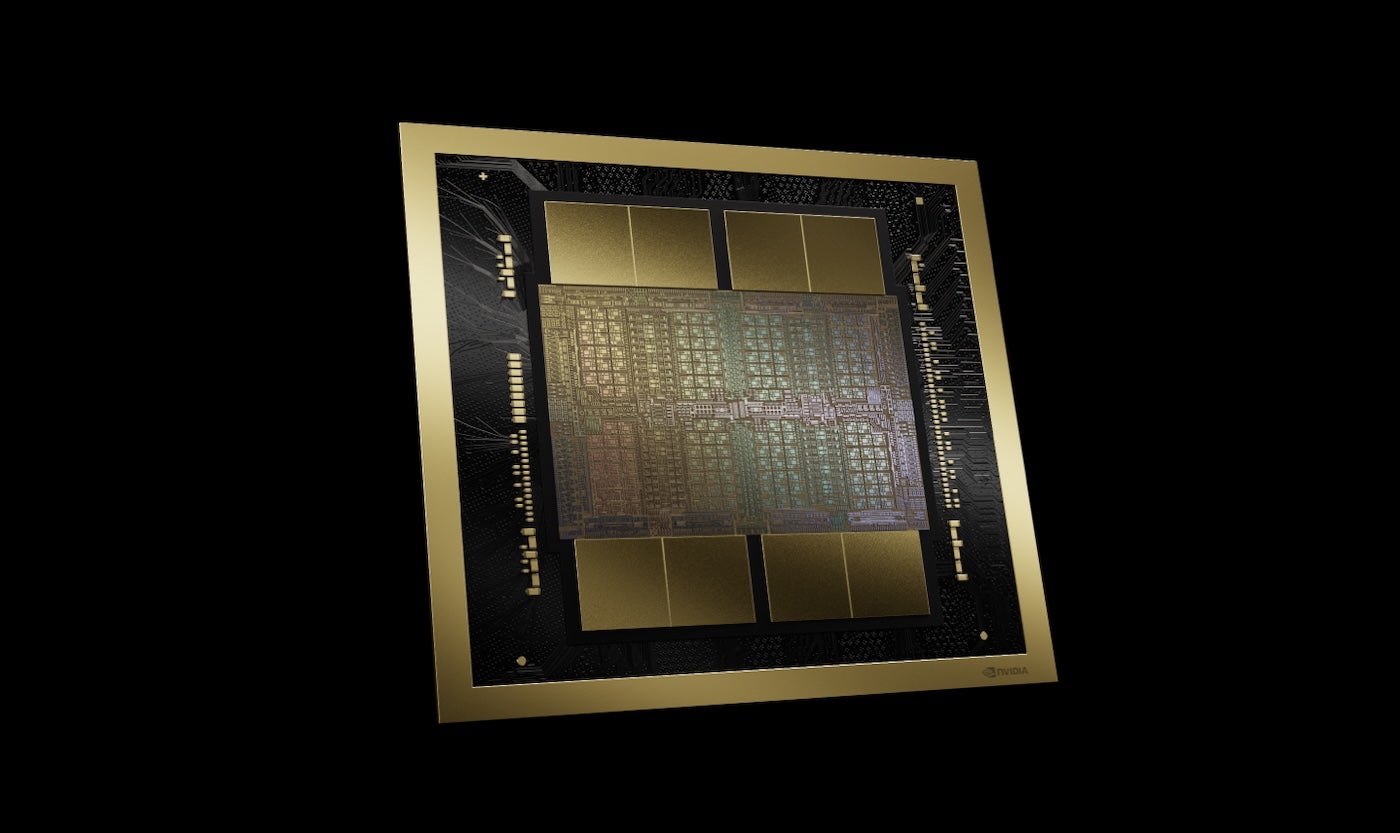

NVIDIA’s newest GPU platform is the Blackwell (Figure A), which companies including AWS, Microsoft and Google plan to adopt for generative AI and other modern computing tasks, NVIDIA CEO Jensen Huang announced during the keynote at the NVIDIA GTC conference on March 18 in San Jose, California.

Figure A

Blackwell-based products will enter the market from NVIDIA partners worldwide in late 2024. Huang announced a long lineup of additional technologies and services from NVIDIA and its partners, speaking of generative AI as just one facet of accelerated computing.

“When you become accelerated, your infrastructure is CUDA GPUs,” Huang said, referring to CUDA, NVIDIA’s parallel computing platform and programming model. “And when that happens, it’s the same infrastructure as for generative AI.”

Blackwell enables large language model training and inference

The Blackwell GPU platform contains two dies connected by a 10 terabytes per second chip-to-chip interconnect, meaning each side can work essentially as if “the two dies think it’s one chip,” Huang said. It has 208 billion transistors and is manufactured using NVIDIA’s 208 billion 4NP TSMC process. It boasts 8 TB/S memory bandwidth and 20 pentaFLOPS of AI performance.

For enterprise, this means Blackwell can perform training and inference for AI models scaling up to 10 trillion parameters, NVIDIA said.

Blackwell is enhanced by the following technologies:

- The second generation of the TensorRT-LLM and NeMo Megatron, both from NVIDIA.

- Frameworks for double the compute and model sizes compared to the first generation transformer engine.

- Confidential computing with native interface encryption protocols for privacy and security.

- A dedicated decompression engine for accelerating database queries in data analytics and data science.

Regarding security, Huang said the reliability engine “does a self test, an in-system test, of every bit of memory on the Blackwell chip and all the memory attached to it. It’s as if we shipped the Blackwell chip with its own tester.”

Blackwell-based products will be available from partner cloud service providers, NVIDIA Cloud Partner program companies and select sovereign clouds.

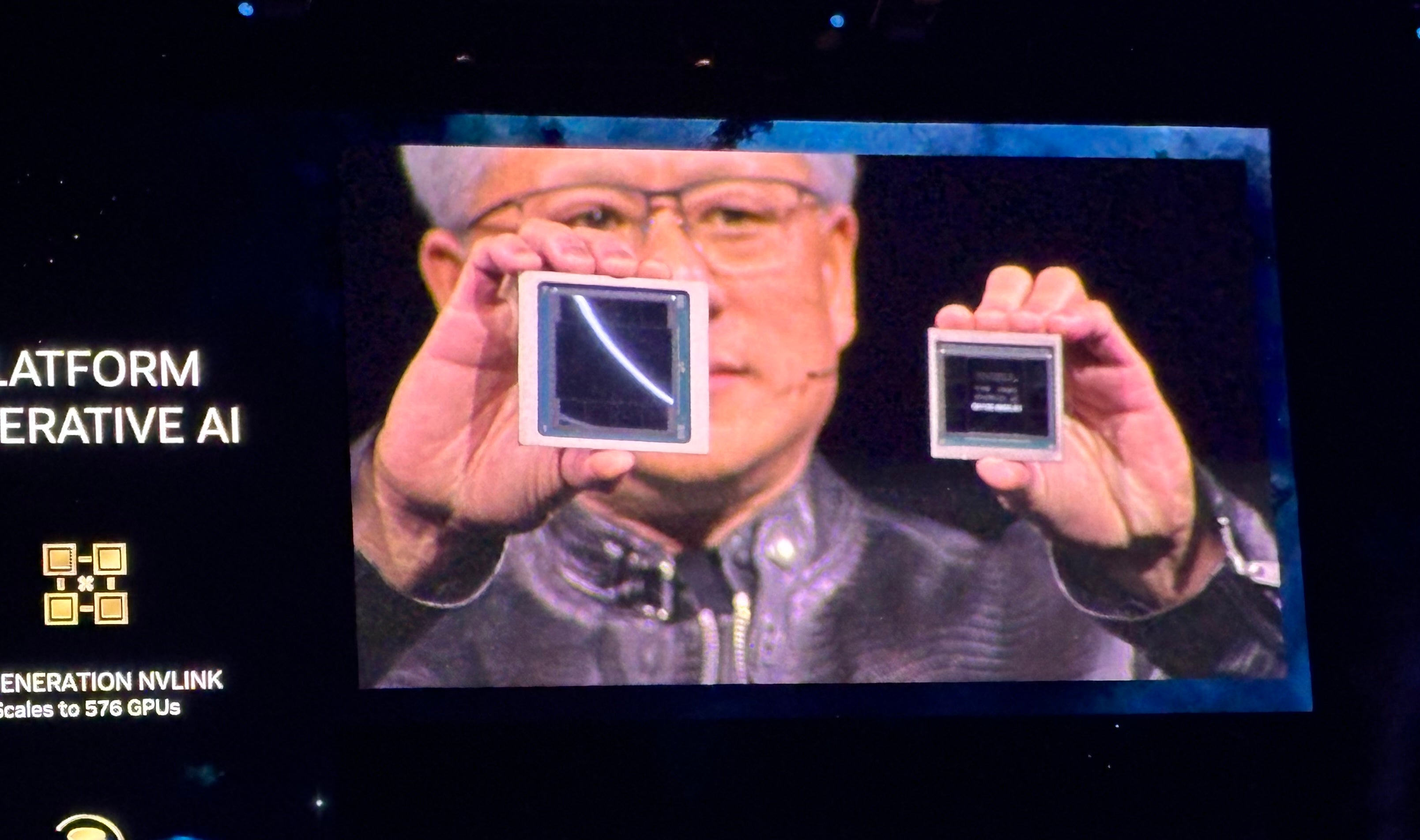

The Blackwell line of GPUs follows the Grace Hopper line of GPUs, which debuted in 2022 (Figure B). NVIDIA says Blackwell will run real-time generative AI on trillion-parameter LLMs at 25x less cost and less energy consumption than the Hopper line.

Figure B

NVIDIA GB200 Grace Blackwell Superchip connects multiple Blackwell GPUs

Along with the Blackwell GPUs, the company announced the NVIDIA GB200 Grace Blackwell Superchip, which links two NVIDIA B200 Tensor Core GPUs to the NVIDIA Grace CPU – providing a new, combined platform for LLM inference. The NVIDIA GB200 Grace Blackwell Superchip can be linked with the company’s newly-announced NVIDIA Quantum-X800 InfiniBand and Spectrum-X800 Ethernet platforms for speeds up to 800 GB/S.

The GB200 will be available on NVIDIA DGX Cloud and through AWS, Google Cloud and Oracle Cloud Infrastructure instances later this year.

New server design looks ahead to trillion-parameter AI models

The GB200 is one component of the newly announced GB200 NVL72, a rack-scale server design that packages together 36 Grace CPUs and 72 Blackwell GPUs for 1.8 exaFLOPs of AI performance. NVIDIA is looking ahead to possible use cases for massive, trillion-parameter LLMs, including persistent memory of conversations, complex scientific applications and multimodal models.

The GB200 NVL72 combines the fifth-generation of NVLink connectors (5,000 NVLink cables) and the GB200 Grace Blackwell Superchip for a massive amount of compute power Huang calls “an exoflops AI system in one single rack.”

“That is more than the average bandwidth of the internet … we could basically send everything to everybody,” Huang said.

“Our goal is to continually drive down the cost and energy – they’re directly correlated with each other – of the computing,” Huang said.

Cooling the GB200 NVL72 requires two liters of water per second.

The next generation of NVLink brings accelerated data center architecture

The fifth-generation of NVLink provides 1.8TB/s bidirectional throughput per GPU communication among up to 576 GPUs. This iteration of NVLink is intended to be used for the most powerful complex LLMs available today.

“In the future, data centers are going to be thought of as an AI factory,” Huang said.

Introducing the NVIDIA Inference Microservices

Another element of the possible “AI factory” is the NVIDIA Inference Microservice, or NIM, which Huang described as “a new way for you to receive and package software.”

The NIMs, which NVIDIA uses internally, are containers with which to train and deploy generative AI. NIMs let developers use APIs, NVIDIA CUDA and Kubernetes in one package.

SEE: Python remains the most popular programming language according to the TIOBE Index. (TechRepublic)

Instead of writing code to program an AI, Huang said, developers can “assemble a team of AIs” that work on the process inside the NIM.

“We want to build chatbots – AI copilots – that work alongside our designers,” Huang said.

NIMs are available starting March 18. Developers can experiment with NIMs for no charge and run them through a NVIDIA AI Enterprise 5.0 subscription.

Other major announcements from NVIDIA at GTC 2024

Huang announced a wide range of new products and services across accelerated computing and generative AI during the NVIDIA GTC 2024 keynote.

NVIDIA announced cuPQC, a library used to accelerate post-quantum cryptography. Developers working on post-quantum cryptography can reach out to NVIDIA for updates about availability.

NVIDIA’s X800 series of network switches accelerates AI infrastructure. Specifically, the X800 series contains the NVIDIA Quantum-X800 InfiniBand or NVIDIA Spectrum-X800 Ethernet switches, the NVIDIA Quantum Q3400 switch and the NVIDIA ConnectXR-8 SuperNIC. The X800 switches will be available in 2025.

Major partnerships detailed during the NVIDIA’s keynote include:

- NVIDIA’s full-stack AI platform will be on Oracle’s Enterprise AI starting March 18.

- AWS will provide access to NVIDIA Grace Blackwell GPU-based Amazon EC2 instances and NVIDIA DGX Cloud with Blackwell security.

- NVIDIA will accelerate Google Cloud with the NVIDIA Grace Blackwell AI computing platform and the NVIDIA DGX Cloud service, coming to Google Cloud. Google has not yet confirmed an availability date, although it is likely to be late 2024. In addition, the NVIDIA H100-powered DGX Cloud platform is generally available on Google Cloud as of March 18.

- Oracle will use the NVIDIA Grace Blackwell in its OCI Supercluster, OCI Compute and NVIDIA DGX Cloud on Oracle Cloud Infrastructure. Some combined Oracle-NVIDIA sovereign AI services are available as of March 18.

- Microsoft will adopt the NVIDIA Grace Blackwell Superchip to accelerate Azure. Availability can be expected later in 2024.

- Dell will use NVIDIA’s AI infrastructure and software suite to create Dell AI Factory, an end-to-end AI enterprise solution, available as of March 18 through traditional channels and Dell APEX. At an undisclosed time in the future, Dell will use the NVIDIA Grace Blackwell Superchip as the basis for a rack scale, high-density, liquid-cooled architecture. The Superchip will be compatible with Dell’s PowerEdge servers.

- SAP will add NVIDIA retrieval-augmented generation capabilities into its Joule copilot. Plus, SAP will use NVIDIA NIMs and other joint services.

“The whole industry is gearing up for Blackwell,” Huang said.

Competitors to NVIDIA’s AI chips

NVIDIA competes primarily with AMD and Intel in regards to providing enterprise AI. Qualcomm, SambaNova, Groq and a wide variety of cloud service providers play in the same space regarding generative AI inference and training.

AWS has its proprietary inference and training platforms: Inferentia and Trainium. As well as partnering with NVIDIA on a wide variety of products, Microsoft has its own AI training and inference chip: the Maia 100 AI Accelerator in Azure.

Disclaimer: NVIDIA paid for my airfare, accommodations and some meals for the NVIDIA GTC event held March 18 – 21 in San Jose, California.